Last Updated on July 26, 2013 by nghiaho12

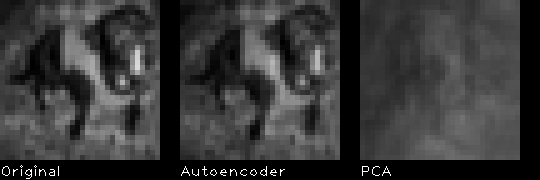

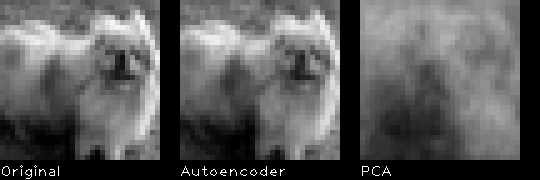

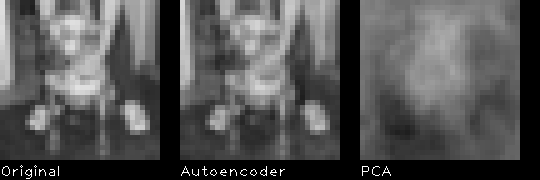

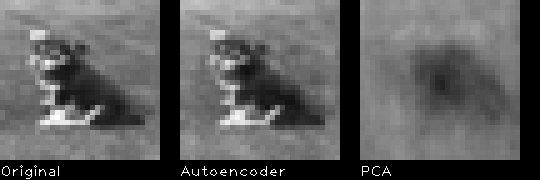

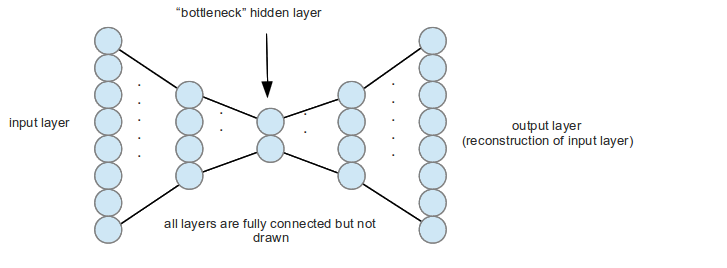

I’ve just finished the wonderful “Neural Networks for Machine Learning” course on Coursera and wanted to apply what I learnt (or what I think I learnt). One of the topics that I found fascinating was the autoencoder neural network. This is a type of neural network that can “compress” data in a way similar to PCA. An example of the network topology is shown below.

The network is fully connected and symmetrical, but I’m too lazy to draw all the connections. Given some input data, the network will try to reconstruct it as best it can at the output. The ‘compression’ is controlled mainly by the middle bottleneck layer. The above example has 8 input neurons, which get squashed to 4 and then to 2. I will use the notation 8-4-2-4-8 to describe the above autoencoder network.
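To make the notation concrete, here is a small Python sketch that turns a string like 8-4-2-4-8 into the shape of each weight matrix and counts the parameters. The function names are made up for illustration, and counting one bias per non-input unit is an assumed convention, not something taken from my C++ code.

```python
def layer_shapes(topology):
    """Return (inputs, outputs) for each weight matrix in a topology like '8-4-2-4-8'."""
    sizes = [int(s) for s in topology.split("-")]
    return list(zip(sizes[:-1], sizes[1:]))


def parameter_count(topology):
    """Count weights plus one bias per non-input unit (an assumed convention)."""
    return sum(n_in * n_out + n_out for n_in, n_out in layer_shapes(topology))


shapes = layer_shapes("8-4-2-4-8")
print(shapes)                        # [(8, 4), (4, 2), (2, 4), (4, 8)]
print(parameter_count("8-4-2-4-8"))  # 98
```

The narrowest layer (2 units here) is the bottleneck, so every input has to be summarised by just 2 numbers before it is expanded again.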

An autoencoder has the potential to do a better job than PCA for dimensionality reduction, especially for visualisation, since it is non-linear.

My autoencoder

I’ve implemented a simple autoencoder that uses RBMs (restricted Boltzmann machines) to initialise the network with sensible weights, then refines them using standard backpropagation. I also added common improvements like momentum and early termination to speed up training.
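For readers who have not seen RBM training before, the following is a rough NumPy sketch of one contrastive divergence (CD-1) update with momentum, followed by a simple early-termination loop. It is not a port of my C++ code: it assumes binary units throughout, the learning rate, momentum and patience values are arbitrary, and stopping on the training reconstruction error is a simplification chosen only to keep the example short.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def cd1_step(v0, W, b_vis, b_hid, vel_W, rng, lr=0.1, momentum=0.5):
    """One CD-1 update for a binary RBM on a batch v0 of shape (n, n_vis)."""
    # Positive phase: hidden probabilities and a binary sample driven by the data.
    h0_prob = sigmoid(v0 @ W + b_hid)
    h0 = (rng.random(h0_prob.shape) < h0_prob).astype(float)
    # Negative phase: reconstruct the visibles, then recompute hidden probabilities.
    v1 = sigmoid(h0 @ W.T + b_vis)
    h1_prob = sigmoid(v1 @ W + b_hid)
    grad_W = (v0.T @ h0_prob - v1.T @ h1_prob) / v0.shape[0]
    # Momentum: blend the new gradient into a running velocity (updated in place).
    vel_W[:] = momentum * vel_W + lr * grad_W
    W += vel_W
    b_vis += lr * (v0 - v1).mean(axis=0)
    b_hid += lr * (h0_prob - h1_prob).mean(axis=0)
    return float(np.mean((v0 - v1) ** 2))  # reconstruction error, for monitoring


rng = np.random.default_rng(0)
data = (rng.random((20, 6)) < 0.5).astype(float)   # toy binary data, not CIFAR
W = 0.01 * rng.standard_normal((6, 3))
b_vis, b_hid, vel_W = np.zeros(6), np.zeros(3), np.zeros((6, 3))

# Early termination: stop once the error has not improved for `patience` epochs.
best, patience, stale = np.inf, 5, 0
for epoch in range(200):
    err = cd1_step(data, W, b_vis, b_hid, vel_W, rng)
    if err < best - 1e-4:
        best, stale = err, 0
    else:
        stale += 1
    if stale >= patience:
        break
```

In a real setup the stopping test would normally look at a held-out set rather than the training batch.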

I used the CIFAR-10 dataset and trained on 100 small images of dogs. The images are 32×32 colour images, which I converted to greyscale, giving a 1024-element vector per image. The network I train on is:

1024-256-64-8-64-256-1024

The input, output and bottleneck layers are linear, with the rest being sigmoid units. I expected this autoencoder to reconstruct the images better than PCA, because it has many more parameters. I’ll compare the results with PCA using the first 8 principal components.
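As an illustration of that layout, here is a NumPy sketch of a forward pass through a 1024-256-64-8-64-256-1024 network in which the bottleneck and output layers skip the sigmoid. The weights are random and the helper names are hypothetical, so it only shows the data flow and the parameter count, not a trained model.

```python
import numpy as np

SIZES = [1024, 256, 64, 8, 64, 256, 1024]


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def init_params(sizes, rng):
    """Small random weights and zero biases for each pair of adjacent layers."""
    return [(0.01 * rng.standard_normal((n_in, n_out)), np.zeros(n_out))
            for n_in, n_out in zip(sizes[:-1], sizes[1:])]


def forward(x, params, linear_layers):
    """Propagate a batch x; layers listed in linear_layers skip the sigmoid."""
    activations = [x]
    for index, (W, b) in enumerate(params, start=1):
        z = activations[-1] @ W + b
        activations.append(z if index in linear_layers else sigmoid(z))
    return activations


rng = np.random.default_rng(0)
params = init_params(SIZES, rng)
bottleneck = SIZES.index(min(SIZES))   # layer 3 holds the 8-unit code
output = len(SIZES) - 1                # layer 6 is the reconstruction
batch = rng.random((5, 1024))          # stand-in for 5 greyscale images
acts = forward(batch, params, linear_layers={bottleneck, output})
code, reconstruction = acts[bottleneck], acts[output]
n_params = sum(W.size + b.size for W, b in params)
print(code.shape, reconstruction.shape, n_params)
```

Counting every weight and bias separately gives 559,752 parameters, whereas PCA with 8 components on 1024-dimensional data stores 8 × 1024 basis values plus a 1024-element mean, i.e. 9,216 numbers.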

Results

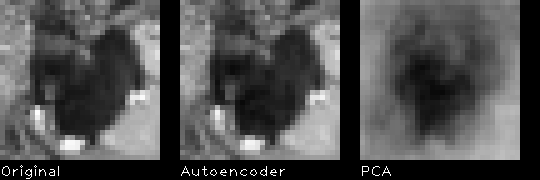

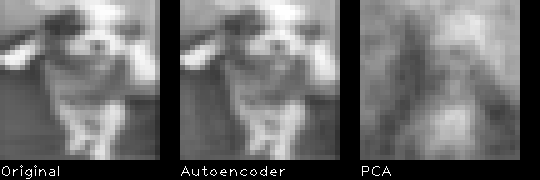

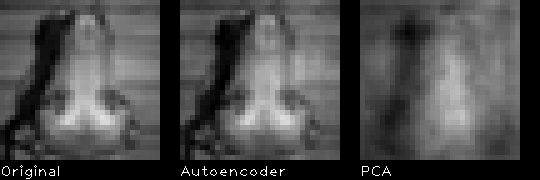

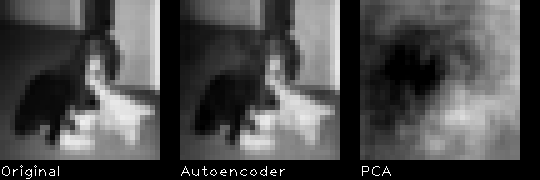

Here are 10 random results from the 100 images I trained on.

The autoencoder does indeed give a better reconstruction than PCA. This gives me confidence that my implementation is somewhat correct.

The RMSE (root mean squared error) for the autoencoder is 9.298, whereas for PCA it is 30.716. Pixel values lie in the range [0, 255].
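For anyone who wants to reproduce the PCA side of this comparison, here is one way it could be computed with NumPy. This is a sketch rather than my actual code: it uses an SVD of the mean-centred data, averages the squared error over every pixel of every image, and runs on random stand-in data, so the numbers it prints will not match the ones above.

```python
import numpy as np


def rmse(original, reconstruction):
    """Root mean squared error over every pixel of every image."""
    diff = np.asarray(original, dtype=float) - np.asarray(reconstruction, dtype=float)
    return float(np.sqrt(np.mean(diff ** 2)))


def pca_reconstruct(X, k):
    """Project the rows of X onto the first k principal components and map back."""
    mean = X.mean(axis=0)
    centred = X - mean
    # Rows of Vt are the principal directions, ordered by decreasing variance.
    _, _, Vt = np.linalg.svd(centred, full_matrices=False)
    components = Vt[:k]
    return (centred @ components.T) @ components + mean


rng = np.random.default_rng(0)
images = rng.integers(0, 256, size=(100, 1024)).astype(float)  # random stand-in data
for k in (2, 8, 32):
    print(k, rmse(images, pca_reconstruct(images, k)))
```

Keep in mind that RMSE depends on the pixel scale ([0, 255] versus [0, 1]) and on how the error is averaged, so values computed with different conventions are not directly comparable.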

All the parameters used can be found in the code.

Download

You can download the code here

Last update: 27/07/2013

You’ll need the following libraries installed

- Armadillo (http://arma.sourceforge.net)

- OpenBLAS (or any other BLAS alternative, but you’ll need to edit the Makefile/Codeblocks project)

- OpenCV (for display)

On Ubuntu 12.10 I use the OpenBLAS package in the repo. Use the latest Armadillo from the website if the Ubuntu one doesn’t work, because I use some recently introduced functions. I recommend using OpenBLAS over Atlas with Armadillo on Ubuntu 12.10, because multi-core support works straight out of the box. This provides a big speed-up.

You’ll also need the dataset http://www.cs.toronto.edu/~kriz/cifar-10-binary.tar.gz
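If you want to inspect the data outside of the C++ code, the binary version of CIFAR-10 is easy to parse: each record is 1 label byte followed by 3072 pixel bytes (1024 red, then 1024 green, then 1024 blue), and dog is class 5. The sketch below is a hypothetical Python helper, not part of the download, and the plain channel average it uses for greyscale is an assumption; my code may weight the channels differently.

```python
import numpy as np

RECORD_BYTES = 1 + 3 * 1024   # 1 label byte, then 1024 red, 1024 green, 1024 blue
DOG_LABEL = 5                 # class order: airplane, automobile, bird, cat, deer, dog, ...


def load_greyscale(raw, label, limit):
    """Return up to `limit` images of class `label` as rows of 1024 grey values."""
    records = np.frombuffer(raw, dtype=np.uint8).reshape(-1, RECORD_BYTES)
    chosen = records[records[:, 0] == label][:limit]
    rgb = chosen[:, 1:].reshape(-1, 3, 1024).astype(float)
    # Plain channel average (an assumption, see the note above).
    return rgb.mean(axis=1)


# Usage with a real batch file (the path is an assumption about where you unpacked it):
# with open("cifar-10-batches-bin/data_batch_1.bin", "rb") as f:
#     dogs = load_greyscale(f.read(), DOG_LABEL, limit=100)
```

Each returned row is one 32×32 image flattened in row-major order, with values in [0, 255].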

Edit main.cpp and change DATASET_FILE to point to your CIFAR dataset path. Compile via make or using CodeBlocks.

All parameter variables can be found in main.cpp near the top of the file.

Great job!

Great work Nghia Ho. How can I apply these autoencoders to traffic classification?

Traffic signs?

network traffic

I’m implementing autoencoders to reduce the dimensionality of network traffic features. I have gone through the following link from Stanford.

http://ufldl.stanford.edu/wiki/index.php/UFLDL_Tutorial

Hmm, not sure on that. You might be better off using a standard machine learning algorithm rather than an autoencoder. The autoencoder is still a bit mysterious to me, in terms of making good use of it.

thank you very much for your reply..! 🙂

Nice tutorial. I’m trying to implement an autoencoder in Python. Do we have to build the full symmetric network for training, or can we simulate the symmetry with only half of it, first doing a forward pass and then a backward pass through the same layers for the reconstruction? If I use only half the layers, I think I will run into trouble with the bias units, since they are not connected backwards. Am I correct? Please tell me the standard way to do it. Thank you.

According to the neural network course I took on Coursera, it seems the standard practice is to:

1. train half the network using RBM.

2. mirror the network

3. refine the whole network using standard backpropagation
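Step 2 is where the bias question gets answered: each RBM already has both hidden and visible biases, so the mirrored half can reuse the transposed weights together with the visible biases. Here is a NumPy sketch of that unrolling, assuming each trained RBM is stored as a plain dict with keys `W`, `b_hid` and `b_vis`. Those names are made up for the example and this is not a copy of my C++ code.

```python
import numpy as np


def unroll(rbms):
    """Turn a stack of trained RBMs into encoder layers plus mirrored decoder layers.

    Each RBM is a dict with 'W' (visible x hidden), 'b_hid' and 'b_vis'.
    """
    encoder = [(r["W"].copy(), r["b_hid"].copy()) for r in rbms]
    # The decoder walks the stack backwards, using the transposed weights and the
    # visible biases, which the encoder half never uses.
    decoder = [(r["W"].T.copy(), r["b_vis"].copy()) for r in reversed(rbms)]
    return encoder + decoder


rng = np.random.default_rng(0)
sizes = [8, 4, 2]   # the encoder half of an 8-4-2-4-8 network
rbms = [{"W": rng.standard_normal((a, b)), "b_hid": np.zeros(b), "b_vis": np.zeros(a)}
        for a, b in zip(sizes[:-1], sizes[1:])]
layers = unroll(rbms)
print([W.shape for W, _ in layers])   # [(8, 4), (4, 2), (2, 4), (4, 8)]
```

The copies matter for step 3: once the network is unrolled, backpropagation is free to adjust the encoder and decoder weights independently.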

Hello, after testing the code, I found that the PCA result is really good:

PCA RMSE results:

| Components | RMSE |
| --- | --- |
| 2 | 3.65024 |
| 4 | 2.69235 |
| 8 | 1.74461 |
| 16 | 0.723931 |
| 32 | 0.0409302 |
| 64 | 1.07965e-14 |
| 128 | 1.08029e-14 |
| 256 | 1.08034e-14 |
| 512 | 1.08034e-14 |

Reducing the dimension to 8 gives RMSE = 1.74461, not the 30.716 you mentioned.

So in my test, the PCA result is better than the autoencoder.

Maybe there is something wrong with my test?

Can you do a reconstruction with 2 components and see what it looks like visually?

Thank you for this nice tutorial! The reconstructed images you show look remarkably good. May I ask if they were among the training set? If yes, it would be interesting to see some reconstructions of unseen dog-images.

The images were among the training set. I suspect the reconstruction is quite good because my training set is small compared to the number of parameters in the autoencoder.

Thank you for this nice tutorial. I am trying to build a classifier using RBMs, but I am unable to reuse the trained RBM. I saved rbms[i].m_weights, rbms[i].m_visible_biases and rbms[i].m_hidden_biases for reuse, but reusing these three alone gives a very high reconstruction error. What other values are important when saving a trained RBM?

And how do I save and load a vector of RBM objects?

The AutoEncoder class has save/load functions. Uncomment line 121 to save.

I did not find load/save methods in the AutoEncoder class. I am using RBM_Autoencoder-0.1.2.tar.gz. It has only the SetRBMHalf, BackPropagation, FeedForward, Encode and PrintSize methods; it does not have load/save.

Download again. Seems like I didn’t include it.

Hi,

I am writing an article about the use of deep learning in aerial imagery segmentation, and I would like to use your autoencoder scheme and link to your article.

Are you ok with that?

Sure, go for it.