Last Updated on December 26, 2011 by nghiaho12

So it’s Boxing Day and I haven’t got much on, so why not another blog post! yay!

Today’s post is about generating synthetic views of planar objects, such as a book. I needed to do this whilst implementing my own version of the Fern algorithm. Here are some references:

- M. Ozuysal, M. Calonder, V. Lepetit and P. Fua, Fast Keypoint Recognition using Random Ferns, IEEE Transactions on Pattern Analysis and Machine Intelligence, Vol. 32, Nr. 3, pp. 448 – 461, March 2010.

- M. Ozuysal, P. Fua and V. Lepetit, Fast Keypoint Recognition in Ten Lines of Code, Conference on Computer Vision and Pattern Recognition, Minneapolis, MI, June 2007.

Also check out their website here.

In summary it’s a cool technique for feature matching that is rotation, scale, lighting, and affine viewpoint invariant, much like SIFT, but does it in a simpler way. Fern does this by generating lots of random synthetic views of the planar object and learning (with a semi-naive Bayes classifier) the features extracted at each view. Because it has seen virtually every view possible, the feature descriptor can be very simple and does not need to be invariant to all the properties mentioned earlier. In fact, the feature descriptor is made up of random binary comparisons. This is in contrast to the more complex SIFT descriptor that has to cater for all sorts of invariance.
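To make "random binary comparisons" a bit more concrete, here is a minimal Python sketch of how a single fern could turn an image patch into an integer, based on my reading of the papers above. The function names (`make_fern`, `fern_index`) and the patch layout (a list of rows of grey values) are my own assumptions for illustration, not code from the authors.

```python
import random

def make_fern(num_tests, patch_size, rng):
    """Pick num_tests random pixel pairs inside a patch_size x patch_size patch.

    Each test is ((x1, y1), (x2, y2)). Hypothetical helper for illustration.
    """
    def random_pixel():
        return (rng.randrange(patch_size), rng.randrange(patch_size))
    return [(random_pixel(), random_pixel()) for _ in range(num_tests)]

def fern_index(patch, fern):
    """Run every binary test and pack the results into one integer.

    A test outputs 1 when the first pixel is darker than the second.
    With S tests the result lies in [0, 2**S - 1].
    """
    index = 0
    for (x1, y1), (x2, y2) in fern:
        bit = 1 if patch[y1][x1] < patch[y2][x2] else 0
        index = (index << 1) | bit
    return index

# Usage: an 8-test fern evaluated on a small synthetic patch
rng = random.Random(0)
fern = make_fern(num_tests=8, patch_size=4, rng=rng)
patch = [[(3 * x + 5 * y) % 7 for x in range(4)] for y in range(4)]
print(fern_index(patch, fern))
```

During training, the index computed for each synthetic view would be used to update a per-class histogram, which is where the semi-naive Bayes part comes in.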

I wrote a non-random planar view generator, which is much easier to interpret from a geometric point of view. I find the idea of random affine transformations tricky to interpret, since they’re a combination of translation, rotation, scaling and shearing. My version treats the planar object as a flat piece of paper in 3D space (with z=0), applies a 3×3 rotation matrix (parameterised by yaw/pitch/roll), then re-projects to 2D using an orthographic projection/affine camera (by keeping x,y and ignoring z). I do this process for all combinations of scaling, yaw, pitch and roll I’m interested in.
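The idea can be sketched in a few lines of plain Python. This is not the demo code itself: the axis convention (yaw about y, pitch about x, roll about z), the multiplication order, and the names `view_affine` and `apply_affine` are assumptions made for this example. Since every point on the plane has z=0 and the projection drops z, only the top-left 2×2 block of the rotation matrix matters.

```python
import math

def rot_x(a):
    c, s = math.cos(a), math.sin(a)
    return [[1, 0, 0], [0, c, -s], [0, s, c]]

def rot_y(a):
    c, s = math.cos(a), math.sin(a)
    return [[c, 0, s], [0, 1, 0], [-s, 0, c]]

def rot_z(a):
    c, s = math.cos(a), math.sin(a)
    return [[c, -s, 0], [s, c, 0], [0, 0, 1]]

def matmul(A, B):
    return [[sum(A[r][k] * B[k][c] for k in range(3)) for c in range(3)]
            for r in range(3)]

def view_affine(scale, yaw_deg, pitch_deg, roll_deg):
    """2x2 affine matrix for a scaled, rotated plane under orthographic projection.

    Assumed convention: R = Rz(roll) * Rx(pitch) * Ry(yaw).
    """
    yaw, pitch, roll = (math.radians(v) for v in (yaw_deg, pitch_deg, roll_deg))
    R = matmul(rot_z(roll), matmul(rot_x(pitch), rot_y(yaw)))
    # Points have z = 0 and we keep only x, y: use the top-left 2x2 block of R
    return [[scale * R[0][0], scale * R[0][1]],
            [scale * R[1][0], scale * R[1][1]]]

def apply_affine(A, pt):
    x, y = pt
    return (A[0][0] * x + A[0][1] * y, A[1][0] * x + A[1][1] * y)

# Usage: a pure 60 degree yaw squashes x by cos(60 deg) and leaves y alone
A = view_affine(1.0, 60, 0, 0)
print(apply_affine(A, (1.0, 0.0)), apply_affine(A, (0.0, 1.0)))
```

In this sketch (0,0) is the centre of the object, so a real warp would also need a translation to keep the result inside the output image.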

I use the following settings for Fern:

- scaling of 1.0, 0.5, 0.25

- yaw, 0 to 60 degrees, increments of 10 degrees

- pitch, 0 to 60 degrees, increments of 10 degrees

- roll, 0 to 360 degrees, increments of 10 degrees

There’s not much point going beyond 60 degrees for yaw/pitch, since you can hardly see the object.
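As a rough idea of how many views these settings produce, here is a small Python sketch that enumerates every combination. One assumption to flag: I stop roll at 350 degrees, because 360 is the same view as 0. Include 360 if you want to match the list above literally.

```python
import itertools

SCALES = [1.0, 0.5, 0.25]
YAWS = range(0, 61, 10)      # 0, 10, ..., 60 degrees
PITCHES = range(0, 61, 10)   # 0, 10, ..., 60 degrees
ROLLS = range(0, 360, 10)    # assumption: 360 left out, it duplicates 0

def all_views():
    """Return every (scale, yaw, pitch, roll) combination as a list of tuples."""
    return list(itertools.product(SCALES, YAWS, PITCHES, ROLLS))

views = all_views()
print(len(views))  # 3 * 7 * 7 * 36 = 5292
```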

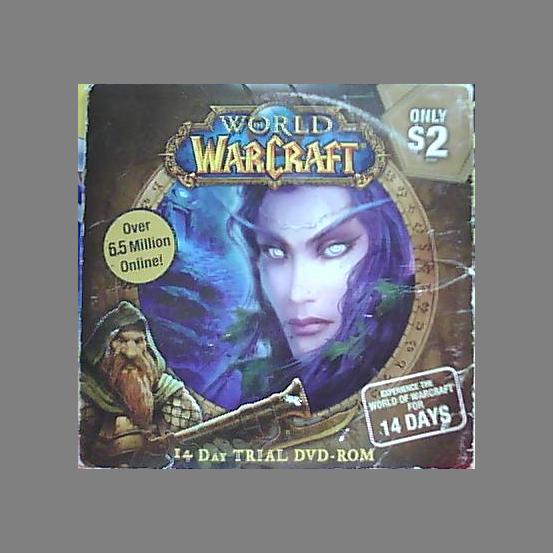

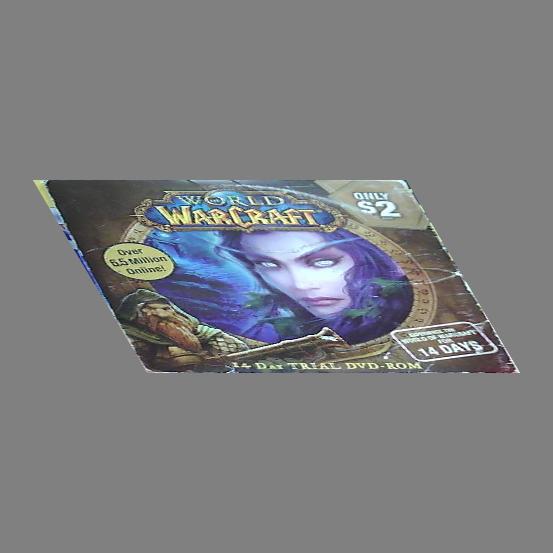

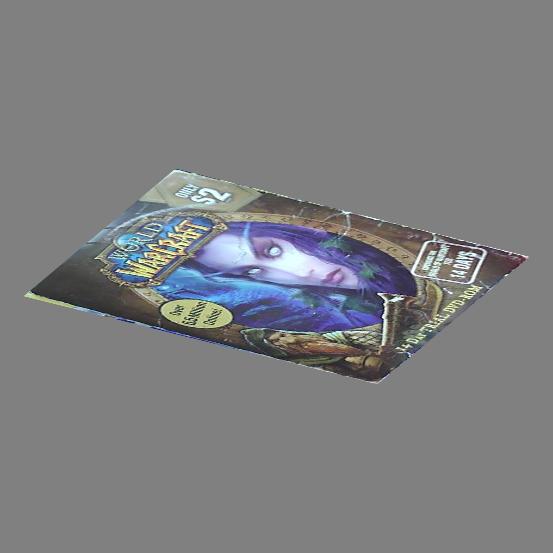

Here are some example outputs

Download

You can download my demo code here: SyntheticPlanarView.tar.gz

You will need OpenCV 2.x installed. Compile using the included CodeBlocks project or an IDE of your choice, then run it on the command line with an image as the argument. Hit any key to step through all the generated views.

Hi,

Thank you for this blog and all the nice work you share.

I notice in this code that you compute the rotation matrix as a product of three rotations about the three world axes. Couldn’t you use the cv::Rodrigues function from OpenCV to do the same?

Hi,

I could certainly have used cv::Rodrigues, just didn’t occur to me at the time 🙂
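For reference, cv::Rodrigues converts a rotation vector (a unit axis multiplied by the angle in radians) into a 3×3 rotation matrix. The sketch below is a plain Python version of the underlying Rodrigues formula, not the OpenCV call itself, and the function name `rodrigues` is just what I chose for this example. A rotation vector along a single coordinate axis gives the same matrix as the corresponding yaw, pitch or roll rotation, so the three matrices would still need to be multiplied together.

```python
import math

def rodrigues(rvec):
    """Rotation vector (axis * angle, radians) -> 3x3 rotation matrix."""
    theta = math.sqrt(sum(v * v for v in rvec))
    if theta < 1e-12:
        # No rotation: return the identity
        return [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
    kx, ky, kz = (v / theta for v in rvec)
    c, s = math.cos(theta), math.sin(theta)
    v = 1.0 - c
    return [
        [c + kx * kx * v,      kx * ky * v - kz * s, kx * kz * v + ky * s],
        [ky * kx * v + kz * s, c + ky * ky * v,      ky * kz * v - kx * s],
        [kz * kx * v - ky * s, kz * ky * v + kx * s, c + kz * kz * v],
    ]

# Usage: 90 degrees about the z axis
R = rodrigues((0.0, 0.0, math.pi / 2))
print(R)
```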

Thank you for your answer :).

I’m extensively testing your code and I’m having some trouble with the transformations provided. For example if you just apply a yaw rotation of say 60 degrees, the image size is changed but its shape is still rectangular. According to the law of perspective it should be a trapezoid.

Indeed, your code uses an affine transform. Shouldn’t it be a projective transform?

The affine transform is a good enough approximation of the perspective transform if we assume the object is far enough away from the camera that there is little perspective distortion, otherwise known as “weak perspective”. You can in theory use the projective transform, but then there are more parameters to vary (e.g. object distance from camera).
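A short way to see why this works, using symbols I am introducing just for this note: let f be the focal length, (X, Y, Z) a point on the object in camera coordinates, Z_0 the distance to the object's centre, and ΔZ the depth variation across the object. Then a pinhole projection gives

$$
x' = \frac{fX}{Z} = \frac{fX}{Z_0 + \Delta Z} \approx \frac{f}{Z_0}\,X,
\qquad
y' \approx \frac{f}{Z_0}\,Y
\qquad \text{when } |\Delta Z| \ll Z_0 .
$$

Under that assumption the ratio f/Z_0 behaves like a single scale factor applied to an orthographic projection, which is roughly the role the scaling setting plays in the affine version.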

Thank you for your answer. Don’t you implicitly compute the object-to-camera distance through the scale change? I guess the perspective transform allows putting it all together.

I will try to compute the full projective transform. Any leads on how to do it? Or a link to a good computer vision course?

You should be able to modify my WarpImage function. There is a 4×4 T matrix that you can use to multiply your 3D point (setting z to some value other than zero), then convert to 2D via

x’ = x/z

y’ = y/z

z’ = z/z = 1

Keep in mind that (0,0) will be the centre of the image. Multiplying it by a 3×3 K camera matrix will transform the points to normal image points.

How is a 4×4 matrix supposed to relate a homogeneous 3D point (dimension 4) to a homogeneous 2D point (dimension 3)? Shouldn’t the matrix be of size 3×4? Should I remove the last row from T?

It’s a standard 3D to 2D projection equation found in computer graphics. Given

T is a 4×4 matrix

K is a 3×3 camera matrix

X1 is a 4×1 homogeneous point

X2 is a 3×1 homogeneous point

X3 is a 3×1 homogeneous point

the equation is

pt3d = T*X1

dividing by depth z

X2.x = pt3d.x / pt3d.z

X2.y = pt3d.y / pt3d.z

X2.z = 1

X3 = K*X2

screen.x = X3.x

screen.y = X3.y
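Here is a minimal Python sketch of those steps, under some assumptions of mine: T is a yaw rotation about the y axis plus a translation along z (which is what puts the plane at a non-zero depth), K has a single focal length f and principal point (cx, cy), and the names `project` and `edge_height` are made up for this example. It is not the WarpImage function from the demo code.

```python
import math

def project(point_xy, yaw_deg, distance, f, cx, cy):
    """Project a point on the z=0 plane to screen coordinates."""
    a = math.radians(yaw_deg)
    c, s = math.cos(a), math.sin(a)
    # T: rotate about the y axis, then push the plane `distance` along z
    T = [[c, 0.0, s, 0.0],
         [0.0, 1.0, 0.0, 0.0],
         [-s, 0.0, c, distance],
         [0.0, 0.0, 0.0, 1.0]]
    K = [[f, 0.0, cx],
         [0.0, f, cy],
         [0.0, 0.0, 1.0]]
    X1 = [point_xy[0], point_xy[1], 0.0, 1.0]
    pt3d = [sum(T[r][k] * X1[k] for k in range(4)) for r in range(4)]
    # Divide by depth z
    X2 = [pt3d[0] / pt3d[2], pt3d[1] / pt3d[2], 1.0]
    X3 = [sum(K[r][k] * X2[k] for k in range(3)) for r in range(3)]
    return X3[0], X3[1]

def edge_height(x, yaw_deg, distance, f=500.0, cx=320.0, cy=240.0):
    """Screen height of the vertical edge of a 2x2 square located at `x`."""
    top = project((x, 1.0), yaw_deg, distance, f, cx, cy)
    bottom = project((x, -1.0), yaw_deg, distance, f, cx, cy)
    return abs(top[1] - bottom[1])

# Usage: with a 60 degree yaw the two vertical edges differ in height (a trapezoid)
print(edge_height(-1.0, 60, 5.0), edge_height(1.0, 60, 5.0))
# Far away, the two edges become almost equal (close to the affine result)
print(edge_height(-1.0, 60, 5000.0), edge_height(1.0, 60, 5000.0))
```

The edge that ends up nearer the camera comes out taller, which gives the trapezoid shape. As the distance grows the two heights converge and the result approaches what the affine version would produce.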

Thank you very much for your answers. I’ll let you know if I manage to get good results.